LabVIEW Artificial Intelligence: A Comprehensive Overview

Understanding LabVIEW

LabVIEW Basics

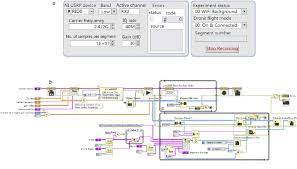

LabVIEW is a graphical programming language and development environment for building applications that interact with hardware devices and systems. National Instruments (NI) first released it in 1986, and it has since become widely used in engineering, science, and research.

LabVIEW's graphical language, G, is based on dataflow programming: a node executes as soon as all of its inputs are available, so the flow of data between nodes, rather than the order of statements, determines how the program runs. Nodes are connected by wires that carry data between them. This makes programs easy to visualize and debug, especially those that involve complex data processing or control systems.
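To make the dataflow rule concrete, here is a toy scheduler in Python. It is an illustration under simplifying assumptions, not how LabVIEW's compiler schedules a block diagram: the `Node` class and `run_dataflow` function are hypothetical, and real LabVIEW can run independent nodes in parallel rather than one after another as shown here.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Tuple


@dataclass
class Node:
    name: str
    func: Callable[..., Any]
    inputs: List[str] = field(default_factory=list)  # upstream node names (the "wires")


def run_dataflow(nodes: List[Node]) -> Tuple[Dict[str, Any], List[str]]:
    """Fire every node whose inputs are all available until none remain."""
    values: Dict[str, Any] = {}
    order: List[str] = []
    pending = list(nodes)
    while pending:
        ready = [n for n in pending if all(i in values for i in n.inputs)]
        if not ready:
            raise ValueError("no node can fire: missing input or cycle")
        for n in ready:
            values[n.name] = n.func(*(values[i] for i in n.inputs))
            order.append(n.name)
            pending.remove(n)
    return values, order


# Listed out of order on purpose: data availability, not position, decides execution.
graph = [
    Node("scale", lambda x: x * 2.0, ["source"]),
    Node("source", lambda: 3.0),
    Node("offset", lambda x: x + 1.0, ["scale"]),
]
values, order = run_dataflow(graph)
print(order, values["offset"])  # ['source', 'scale', 'offset'] 7.0
```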

LabVIEW also ships with a wide range of built-in libraries and tools for signal processing, data acquisition, and analysis. These libraries can be used to build custom applications or to extend existing ones.

Role of LabVIEW in AI

Artificial intelligence (AI) has become increasingly important in recent years, with applications in industries such as healthcare, finance, and manufacturing. LabVIEW can be used to develop AI applications by leveraging its data processing and hardware integration capabilities.

One key area of AI where LabVIEW can be used is machine learning, in which algorithms are trained on large datasets to make predictions or decisions. LabVIEW's data acquisition, signal processing, and analysis tools can preprocess data, for example by filtering raw signals and extracting features, before it is fed into machine learning algorithms.
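A minimal sketch of that preprocessing step, assuming one block of samples has already been acquired: it reduces the block to a few common time-domain features that a classifier could consume. The function name `extract_features` and the choice of features are illustrative assumptions; on a LabVIEW block diagram the same quantities would typically come from built-in statistics functions.

```python
import math
from typing import Dict, Sequence


def extract_features(signal: Sequence[float]) -> Dict[str, float]:
    """Compute a few common time-domain features from one block of samples."""
    n = len(signal)
    if n == 0:
        raise ValueError("signal must not be empty")
    mean = sum(signal) / n
    rms = math.sqrt(sum(x * x for x in signal) / n)
    peak = max(abs(x) for x in signal)
    std = math.sqrt(sum((x - mean) ** 2 for x in signal) / n)  # population std
    return {
        "mean": mean,
        "rms": rms,
        "peak": peak,
        "std": std,
        "crest_factor": peak / rms if rms > 0 else 0.0,  # peak-to-RMS ratio
    }


# One full period of a sine wave: crest factor should be close to sqrt(2)
block = [math.sin(2 * math.pi * k / 64) for k in range(64)]
print(extract_features(block))
```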

Another area is robotics, where LabVIEW can be used to control and monitor the behavior of robots. Its hardware integration tools can interface with a robot's sensors and actuators.

Overall, LabVIEW's data processing and hardware integration capabilities make it a practical tool for developing AI applications. Its graphical programming language and built-in libraries simplify developing and debugging complex applications, and its support for a wide range of hardware devices makes it versatile across industries.

AI in LabVIEW

LabVIEW's integrated development environment (IDE), together with its add-on toolkits, supports applications in many domains, including artificial intelligence (AI). This section covers the AI tools available for LabVIEW and the design patterns that help structure AI applications.

AI Tools in LabVIEW

The following add-on toolkits and modules support AI development in LabVIEW:

- The LabVIEW Analytics and Machine Learning Toolkit: This toolkit provides machine learning algorithms and tools for training models and deploying them in LabVIEW applications. It includes algorithms for anomaly detection, classification, and clustering.

- The Neural Network Toolkit: This toolkit provides a set of tools for creating, training, and deploying artificial neural networks in LabVIEW applications. The toolkit includes tools for creating feedforward, recurrent, and convolutional neural networks, among others.

- The Vision Development Module: This module provides a set of tools for creating computer vision applications in LabVIEW. The module includes tools for image processing, feature extraction, object detection, and classification, among others.

Developers can use these tools to create a wide range of AI applications, including predictive maintenance, anomaly detection, image recognition, and natural language processing, among others.

Design Patterns for AI Applications in LabVIEW

The patterns below are general software design patterns rather than AI-specific LabVIEW features. They can be implemented with LabVIEW classes (LabVIEW object-oriented programming) and help structure AI applications:

- The Model-View-Controller (MVC) pattern: This pattern separates the application into three components: the model, which represents the data and business logic; the view, which represents the user interface; and the controller, which manages the interaction between the model and the view. This pattern enables developers to create scalable and maintainable applications.

- The Observer pattern: This pattern enables one object to notify other objects when its state changes. It is useful in AI applications where the state of the system changes dynamically, for example when a model flags an anomaly and both the user interface and a data logger need to react.

- The Strategy pattern: This pattern encapsulates algorithms and makes them interchangeable. It is useful in AI applications where different algorithms may be used for different tasks, for example swapping one anomaly detection algorithm for another without changing the code that calls it.
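The Strategy and Observer patterns can be sketched in a few lines of Python. In LabVIEW, one common approach is to implement Strategy with LabVIEW classes and dynamic dispatch and Observer with user events; the Python below only illustrates the structure, and the class names (`PeakDetector`, `MeanDetector`, `Monitor`) are hypothetical.

```python
from typing import Callable, List, Protocol, Sequence


class Detector(Protocol):
    """Strategy interface: any object with a score() method can be plugged in."""

    def score(self, window: Sequence[float]) -> float: ...


class PeakDetector:
    def score(self, window: Sequence[float]) -> float:
        return max(abs(x) for x in window)


class MeanDetector:
    def score(self, window: Sequence[float]) -> float:
        return sum(window) / len(window)


class Monitor:
    """Subject in the Observer pattern that also holds a swappable Strategy."""

    def __init__(self, detector: Detector, threshold: float) -> None:
        self.detector = detector
        self.threshold = threshold
        self._observers: List[Callable[[float], None]] = []

    def subscribe(self, callback: Callable[[float], None]) -> None:
        self._observers.append(callback)

    def process(self, window: Sequence[float]) -> None:
        s = self.detector.score(window)
        if s > self.threshold:  # a state change worth announcing
            for notify in self._observers:
                notify(s)


alarms: List[float] = []
monitor = Monitor(PeakDetector(), threshold=2.0)
monitor.subscribe(alarms.append)
monitor.process([0.5, -3.0, 1.0])  # peak 3.0 > 2.0, so observers are notified
monitor.detector = MeanDetector()  # swap the algorithm at run time
monitor.process([0.5, -3.0, 1.0])  # mean -0.5, no notification
print(alarms)  # [3.0]
```

The caller of `process` never changes when the detector is swapped, which is the point of Strategy; Observer keeps the monitor unaware of who is listening.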

Developers can use these patterns to create well-structured and maintainable AI applications that can be easily extended and modified as requirements change.

In conclusion, LabVIEW's AI toolkits, combined with established software design patterns, let developers build AI applications in various domains that are scalable, maintainable, and easy to extend as requirements change.

Building AI Systems with LabVIEW

Building AI systems with LabVIEW involves several stages of development, including data acquisition, data analysis, AI model development, and system deployment. Each stage requires careful planning, implementation, and testing to ensure that the AI system performs as expected.

Data Acquisition

Data acquisition is the process of collecting data from sources such as sensors, cameras, or databases. In LabVIEW, this can be done with tools such as NI-DAQmx for measurement hardware, Vision Acquisition Software for cameras, or the Database Connectivity Toolkit for databases. These tools acquire the data and store it in a format that can be easily analyzed.
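LabVIEW applications commonly separate acquisition from processing with the producer/consumer design pattern, in which one loop enqueues data and another dequeues and processes it. The Python sketch below mirrors that structure with two threads and a bounded queue. It is an analogy, not LabVIEW code: the sensor is simulated with random numbers so the example runs without hardware (a real Python acquisition loop could use NI's `nidaqmx` package instead), and the block counts and sizes are arbitrary.

```python
import queue
import random
import threading
from typing import List


def producer(q: "queue.Queue", n_blocks: int, block_size: int) -> None:
    """Stand-in for an acquisition loop: pushes blocks of simulated samples."""
    rng = random.Random(0)  # simulated sensor; a real system would read hardware here
    for _ in range(n_blocks):
        q.put([rng.gauss(0.0, 1.0) for _ in range(block_size)])
    q.put(None)  # sentinel: acquisition finished


def consumer(q: "queue.Queue", results: List[float]) -> None:
    """Processing loop: dequeues blocks and stores one summary value per block."""
    while True:
        block = q.get()
        if block is None:
            break
        results.append(max(abs(x) for x in block))


q: "queue.Queue" = queue.Queue(maxsize=8)  # bounded, so a slow consumer throttles the producer
results: List[float] = []
threads = [
    threading.Thread(target=producer, args=(q, 20, 100)),
    threading.Thread(target=consumer, args=(q, results)),
]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(len(results))  # 20
```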

Data Analysis

Data analysis is the process of extracting meaningful insights from the acquired data. In LabVIEW, this can be done with the built-in signal processing and analysis functions, add-ons such as the Advanced Signal Processing Toolkit or the Vision Development Module, and the DataFinder Toolkit, which helps locate and organize stored measurement data. These tools support techniques such as filtering, feature extraction, and pattern recognition.
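Filtering is often the first analysis step. A minimal sketch, assuming evenly sampled data, is the first-order low-pass (exponential smoothing) filter below; LabVIEW's built-in filter VIs offer more capable designs, and the function name and `alpha` parameter here are illustrative only.

```python
from typing import List, Sequence


def lowpass(signal: Sequence[float], alpha: float) -> List[float]:
    """First-order IIR low-pass filter: y[n] = y[n-1] + alpha * (x[n] - y[n-1])."""
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    out: List[float] = []
    y = signal[0] if signal else 0.0  # start at the first sample to avoid a startup transient
    for x in signal:
        y = y + alpha * (x - y)
        out.append(y)
    return out


noisy = [0.0, 1.0, 0.0, 1.0, 0.0, 1.0]
print(lowpass(noisy, 0.5))  # [0.0, 0.5, 0.25, 0.625, 0.3125, 0.65625]
```

Smaller `alpha` smooths more heavily. For a sample period `dt` and time constant `RC`, `alpha = dt / (RC + dt)` gives the usual discrete approximation of an RC low-pass filter.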

AI Model Development

AI model development is the process of building a model that learns from the analyzed data and makes predictions or decisions on new data. In LabVIEW, this can be done with tools such as the Analytics and Machine Learning Toolkit or add-on neural network and deep learning toolkits. Depending on the toolkit, models can be built with techniques such as supervised learning, unsupervised learning, or reinforcement learning.
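As an illustration of supervised learning, the sketch below trains a logistic regression classifier with batch gradient descent in plain Python. It is not the algorithm used by any LabVIEW toolkit; the training data, learning rate, and epoch count are made-up values chosen only so that the toy example separates cleanly.

```python
import math
from typing import List, Sequence, Tuple


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_logistic(X: Sequence[Sequence[float]], y: Sequence[int],
                   lr: float = 0.5, epochs: int = 2000) -> Tuple[List[float], float]:
    """Batch gradient descent on the log-loss for binary labels y in {0, 1}."""
    n_features, m = len(X[0]), len(X)
    w, b = [0.0] * n_features, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(n_features):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * gj / m for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / m
    return w, b


def predict(w: Sequence[float], b: float, x: Sequence[float]) -> int:
    return 1 if sum(wj * xj for wj, xj in zip(w, x)) + b >= 0.0 else 0


# Toy features [rms, peak]; label 1 means "faulty" (made up for illustration)
X = [[0.2, 0.5], [0.3, 0.6], [0.25, 0.4], [1.1, 2.5], [1.3, 2.8], [0.9, 2.2]]
y = [0, 0, 0, 1, 1, 1]
w, b = train_logistic(X, y)
print([predict(w, b, x) for x in X])
```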

System Deployment

System deployment means moving the AI system into a production environment. In LabVIEW, this can be done with NI software such as SystemLink, InsightCM Enterprise, or VeriStand. Depending on the tool, the system can be deployed to platforms such as embedded systems, industrial PCs, or cloud servers.
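One simple hand-off between development and deployment is a file that describes the trained model, which the deployed application reloads. The sketch assumes a linear model and a hypothetical JSON layout (`version`, `features`, `weights`, `bias`); this is not a format defined by any NI deployment tool. Storing the feature names alongside the weights lets the target check that inputs arrive in the order the model was trained on.

```python
import json
from typing import Dict, List


def export_model(weights: List[float], bias: float, feature_names: List[str]) -> str:
    """Serialize a trained linear model to a JSON string (hypothetical schema)."""
    return json.dumps({"version": 1, "features": feature_names,
                       "weights": weights, "bias": bias})


def load_and_score(model_json: str, sample: Dict[str, float]) -> float:
    """Rebuild the model on the target and compute its raw decision value."""
    model = json.loads(model_json)
    if model.get("version") != 1:
        raise ValueError("unsupported model version")
    x = [sample[name] for name in model["features"]]  # enforce the training feature order
    return sum(w * xi for w, xi in zip(model["weights"], x)) + model["bias"]


blob = export_model([2.0, 1.5], -4.0, ["rms", "peak"])
print(load_and_score(blob, {"peak": 2.0, "rms": 1.0}))  # 1.0
```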

In conclusion, building AI systems with LabVIEW requires careful planning, implementation, and testing at each stage of development. By using the various tools available in LabVIEW, users can acquire, analyze, develop, and deploy AI systems that can perform various tasks, such as predictive maintenance, anomaly detection, or quality control.

Practical Applications of AI in LabVIEW

Artificial intelligence (AI) is a rapidly growing field with the potential to transform a wide range of industries. LabVIEW, a graphical programming language widely used in test, measurement, and automation, can be combined with AI to build intelligent systems capable of performing complex tasks. Here are some practical applications of AI in LabVIEW:

Industrial Automation

In industrial automation, AI-powered LabVIEW systems can automate tasks that previously required human intervention. These systems can analyze large amounts of data in real time and make decisions based on it. For example, an AI-powered system can monitor a manufacturing line and detect defects as they occur, allowing immediate corrective action. This improves product quality and also reduces downtime and costs.
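A minimal sketch of real-time defect detection, assuming each reading is a scalar such as a measured dimension: the detector keeps a running mean and variance with Welford's online algorithm and flags readings more than `k` standard deviations from the mean. The class name, the `warmup` length, and the readings are hypothetical; a production system would likely use richer features and a trained model.

```python
import math


class StreamingAnomalyDetector:
    """Flags samples more than k standard deviations from the running mean.

    Welford's online algorithm keeps memory constant however long the line runs.
    """

    def __init__(self, k: float = 3.0, warmup: int = 10) -> None:
        if warmup < 2:
            raise ValueError("warmup must be at least 2 to estimate a variance")
        self.k = k
        self.warmup = warmup
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, x: float) -> bool:
        is_anomaly = False
        if self.n >= self.warmup:
            std = math.sqrt(self.m2 / (self.n - 1))  # sample standard deviation
            is_anomaly = std > 0 and abs(x - self.mean) > self.k * std
        if not is_anomaly:  # keep flagged outliers out of the baseline statistics
            self.n += 1
            delta = x - self.mean
            self.mean += delta / self.n
            self.m2 += delta * (x - self.mean)
        return is_anomaly


det = StreamingAnomalyDetector(k=3.0, warmup=5)
readings = [10.0, 10.2, 9.9, 10.1, 9.8, 10.0, 10.1, 14.0, 9.9]
flags = [det.update(r) for r in readings]
print(flags)  # only the 14.0 reading is flagged
```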

Healthcare

In healthcare, AI-powered LabVIEW systems can analyze patient data to support clinical decisions. For example, a system could combine a patient's medical history, current condition, and genetic information to help clinicians develop a personalized treatment plan. Such systems could improve patient outcomes and reduce healthcare costs by avoiding unnecessary treatments and procedures.

Transportation

In transportation, AI-powered LabVIEW systems can improve safety and efficiency. These systems can analyze data from sensors and cameras to detect potential hazards and make decisions in real time. For example, a vision system could analyze camera feeds from a self-driving car to detect pedestrians, other vehicles, and road signs, allowing the car to react in real time and avoid accidents.

In conclusion, AI-powered LabVIEW systems are being used in a wide range of industries to improve efficiency, reduce costs, and improve outcomes. As AI technology continues to advance, we can expect to see even more practical applications of AI in LabVIEW in the future.

Challenges and Solutions in LabVIEW AI

Artificial intelligence (AI) is a rapidly growing field with a wide range of applications in various industries. LabVIEW can be used to develop AI applications, but doing so presents some challenges. Here are the major challenges and their solutions:

Challenge: Limited hardware resources

AI algorithms require significant computational power, which can be a challenge for embedded systems with limited hardware resources. The LabVIEW Real-Time and LabVIEW FPGA modules help address this; in particular, FPGAs can execute AI algorithms in a power-efficient way. Even so, it is important to choose hardware that can handle the computational demands of the AI algorithms.
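Reducing model precision is one common way to fit AI models onto constrained targets, and FPGAs in particular tend to favor fixed-point arithmetic. The sketch below shows symmetric per-tensor int8 quantization of a weight vector. It is a generic technique, not a LabVIEW FPGA feature; the function names are illustrative, and the reconstruction error is bounded by half the scale factor.

```python
from typing import List, Sequence, Tuple


def quantize_int8(weights: Sequence[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: w is approximated by q * scale, q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: Sequence[int], scale: float) -> List[float]:
    return [qi * scale for qi in q]


weights_fp = [0.5, -1.27, 0.0, 1.0]
q, scale = quantize_int8(weights_fp)
max_err = max(abs(a - b) for a, b in zip(weights_fp, dequantize(q, scale)))
print(q, scale, max_err)  # max_err is at most scale / 2
```

Storing 8-bit integers instead of 32-bit floats cuts weight memory by a factor of four, at the cost of the bounded rounding error shown.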

Challenge: Complex algorithms

AI algorithms can be complex and difficult to implement in LabVIEW. Deep learning algorithms, for example, require a lot of data and can be difficult to train. To overcome this challenge, LabVIEW developers can use pre-trained models and libraries to simplify the implementation of AI algorithms.

Challenge: Data acquisition and processing

AI algorithms require large amounts of data to train and test. In LabVIEW, acquiring and processing data can be a challenge, especially with real-time data. To address this, LabVIEW developers can use NI data acquisition hardware and software tools, and can structure applications so that acquisition and processing run in separate loops (the producer/consumer design pattern), which helps prevent data loss when processing falls behind.
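Real-time data usually arrives as an unbounded stream, while most models expect fixed-length inputs. A minimal sketch, assuming samples arrive one at a time, uses a ring buffer to cut the stream into overlapping windows; `size` and `hop` are hypothetical parameters that would be tuned to the signal and the model.

```python
from collections import deque
from typing import Deque, Iterable, Iterator, List


def sliding_windows(stream: Iterable[float], size: int, hop: int) -> Iterator[List[float]]:
    """Yield the first full window, then a new window every `hop` samples."""
    if size <= 0 or hop <= 0:
        raise ValueError("size and hop must be positive")
    buf: Deque[float] = deque(maxlen=size)  # ring buffer: the oldest sample drops off
    for count, x in enumerate(stream, start=1):
        buf.append(x)
        if count >= size and (count - size) % hop == 0:
            yield list(buf)


print(list(sliding_windows(range(8), size=4, hop=2)))
# [[0, 1, 2, 3], [2, 3, 4, 5], [4, 5, 6, 7]]
```

Because the generator consumes the stream lazily and the buffer has a fixed length, memory use stays constant regardless of how long acquisition runs.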

Challenge: Integration with other systems

AI applications often need to be integrated with other systems, such as databases and web services. In LabVIEW, this can be a challenge due to the limited support for some protocols and interfaces. To overcome this challenge, LabVIEW developers can use third-party libraries and tools to integrate their AI applications with other systems.

Challenge: Debugging and testing

AI algorithms can be difficult to debug and test, especially with complex models and large datasets. In LabVIEW, developers can use debugging tools such as probes, breakpoints, and execution highlighting to identify and fix errors in their AI applications. Developers can also use simulation tools to test their AI applications before deploying them in real-world scenarios.

In conclusion, LabVIEW AI presents some challenges, but there are also solutions available to overcome them. By carefully choosing hardware, using pre-trained models and libraries, acquiring and processing data efficiently, integrating with other systems, and using debugging and testing tools, LabVIEW developers can create effective AI applications.

Future of AI in LabVIEW

LabVIEW has been a popular platform for developing scientific and engineering applications for over three decades. With the rise of artificial intelligence (AI), LabVIEW has also evolved to include AI capabilities. The future of AI in LabVIEW looks promising, with new features and technologies being developed to enhance its capabilities.

One of the key areas LabVIEW is focusing on is machine learning. The LabVIEW Analytics and Machine Learning Toolkit is a software add-on that provides tools for training machine learning models. These models can discover patterns in large amounts of data using anomaly detection, classification, and clustering algorithms, and trained models can be deployed to recognize patterns in new data on NI Linux Real-Time targets.
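Clustering is one of the algorithm families named above. As a generic illustration, not the toolkit's own implementation, the sketch below runs k-means with Lloyd's algorithm on a few made-up 2-D feature vectors. The `seed` parameter is an assumption added to make the example reproducible.

```python
import math
import random
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def kmeans(points: Sequence[Point], k: int, iters: int = 100,
           seed: int = 0) -> Tuple[List[Point], List[int]]:
    """Lloyd's algorithm: alternate nearest-centroid assignment and centroid update."""
    rng = random.Random(seed)
    centroids: List[Point] = [tuple(p) for p in rng.sample(list(points), k)]
    labels = [0] * len(points)
    for _ in range(iters):
        # Assignment step: each point joins its nearest centroid
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
        # Update step: move each centroid to the mean of its members
        new: List[Point] = []
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                new.append(tuple(sum(dim) / len(members) for dim in zip(*members)))
            else:
                new.append(centroids[c])  # leave an empty cluster's centroid in place
        if new == centroids:  # converged: assignments no longer change
            break
        centroids = new
    return centroids, labels


pts = [(0.0, 0.1), (0.2, 0.0), (0.1, 0.2), (5.0, 5.1), (5.2, 4.9), (4.9, 5.0)]
centroids, labels = kmeans(pts, k=2)
print(labels)
```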

Another area where LabVIEW is expanding its AI capabilities is deep learning. Add-on toolkits such as the Deep Learning Toolkit for LabVIEW, a third-party product, provide VIs for designing, training, and deploying deep neural networks. These toolkits enable engineers and scientists to develop and deploy deep learning models for applications such as image recognition.

LabVIEW is also exploring the use of AI for optimization and control applications. The Control Design and Simulation Module for LabVIEW provides tools for designing, simulating, and implementing control systems. Combined with AI algorithms that adjust controller parameters in real time, these tools can help optimize the performance of complex systems such as chemical processes or manufacturing lines.
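The sketch below illustrates the idea on a simulated first-order plant: a discrete PID controller, plus a deliberately crude grid search that picks gains by minimizing integrated squared error (ISE). The grid search stands in for the AI algorithms mentioned above and is an assumption for illustration only; a real system might use a more capable optimizer, and the plant model, candidate gains, and sample period are all made up.

```python
from typing import Optional, Tuple


class PID:
    """Discrete PID controller with a fixed sample period dt (seconds)."""

    def __init__(self, kp: float, ki: float, kd: float, dt: float) -> None:
        self.kp, self.ki, self.kd, self.dt = kp, ki, kd, dt
        self.integral = 0.0
        self.prev_error: Optional[float] = None

    def step(self, setpoint: float, measurement: float) -> float:
        error = setpoint - measurement
        self.integral += error * self.dt
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / self.dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate(pid: PID, setpoint: float, steps: int, tau: float = 1.0) -> Tuple[float, float]:
    """Run a first-order plant dy/dt = (u - y) / tau with forward-Euler integration.

    Returns the final output and the integrated squared error.
    """
    y, ise = 0.0, 0.0
    for _ in range(steps):
        u = pid.step(setpoint, y)
        y += pid.dt * (u - y) / tau
        ise += (setpoint - y) ** 2 * pid.dt
    return y, ise


# Crude "tuner": try each candidate gain pair and keep the one with the lowest ISE
candidates = [(kp, ki) for kp in (0.5, 1.0, 2.0, 4.0) for ki in (0.5, 1.0, 2.0)]
best = min(candidates, key=lambda g: simulate(PID(g[0], g[1], 0.0, 0.01), 1.0, 2000)[1])
print("best (kp, ki):", best)
```

Without the integral term (`ki = 0`), this plant settles at `kp / (1 + kp)` of the setpoint rather than at the setpoint itself, which is why the search only considers nonzero `ki`.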

In addition to these capabilities, LabVIEW is also exploring the use of AI for data analysis and visualization. The Data Dashboard for LabVIEW provides a platform for creating custom dashboards that can be used to monitor and analyze data in real-time. These dashboards can be enhanced with AI algorithms to provide predictive analytics, anomaly detection, and other advanced features.

Overall, the future of AI in LabVIEW looks bright, with new features and technologies being developed to enhance its capabilities. As AI continues to evolve and become more pervasive, LabVIEW is well-positioned to remain a leading platform for scientific and engineering applications.

Frequently Asked Questions

What are some applications of combining LabVIEW and AI?

Combining LabVIEW and AI can lead to the development of intelligent systems that can perform complex tasks such as image and speech recognition, predictive maintenance, and process control. Some specific applications include autonomous vehicles, medical diagnosis, and industrial automation.

How can LabVIEW be used in developing AI models?

LabVIEW provides a graphical programming environment for developing and testing AI models. Its built-in signal processing and control libraries, together with add-ons such as the Analytics and Machine Learning Toolkit, can be used to develop AI models. LabVIEW can also call code written in other languages, for example through the Python Node or the MATLAB script node, to leverage their AI capabilities.
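As a sketch of the Python route, here is a module that a LabVIEW Python Node (available in LabVIEW 2018 and later) could call. The module name, the function, and the baseline constants are hypothetical; keeping the interface to plain types (a list of floats in, one float out) is an assumption intended to keep the data conversion between LabVIEW and Python simple.

```python
# anomaly_model.py: hypothetical module for a LabVIEW Python Node to call
import math
from typing import List

# Hypothetical baseline statistics, e.g. estimated offline from healthy-machine data
BASELINE_MEAN = 0.0
BASELINE_STD = 1.0


def anomaly_score(samples: List[float]) -> float:
    """Return the block's RMS deviation from the baseline mean, in baseline standard deviations.

    Plain Python types in and out: a 1D array of doubles in, one double out.
    """
    if not samples:
        return 0.0
    rms = math.sqrt(sum((x - BASELINE_MEAN) ** 2 for x in samples) / len(samples))
    return rms / BASELINE_STD


if __name__ == "__main__":
    print(anomaly_score([3.0, -3.0, 3.0, -3.0]))  # 3.0
```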

What are some advantages of using LabVIEW for AI development?

One of the main advantages of using LabVIEW for AI development is its graphical programming environment, which makes code easy to visualize and debug. In addition, LabVIEW's built-in signal processing and control libraries, along with add-on machine learning toolkits, can save time and effort in developing AI models. LabVIEW also has a large community of users who share their code and expertise, which can be helpful in developing AI models.

What are some limitations of using LabVIEW for AI development?

One of the limitations of using LabVIEW for AI development is its learning curve. LabVIEW requires a different way of thinking compared to traditional text-based programming languages, which can be challenging for some developers. Additionally, LabVIEW may not be as flexible as other programming languages when it comes to developing custom algorithms or integrating with third-party libraries.

How does LabVIEW compare to other programming languages for AI development?

LabVIEW has its own strengths and weaknesses compared to other languages for AI development. Its main strengths are a graphical environment that is easy to visualize and debug and ready-made libraries for signal processing and control, which save development time. Its main weakness is that text-based languages are generally more flexible for developing custom algorithms and integrating third-party libraries.

What are some best practices for integrating LabVIEW and AI technologies?

Some best practices for integrating LabVIEW and AI technologies include selecting the appropriate AI algorithm for the task at hand, optimizing the algorithm for performance, and validating the results. Additionally, it is important to use modular design practices and version control to ensure that the code is maintainable and scalable. Finally, it is helpful to leverage the LabVIEW community for support and expertise.
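Validating results usually means evaluating a model on data it was not trained on. The sketch below holds out a reproducible test set, fits a one-parameter threshold classifier on the training portion only, and reports accuracy on the held-out portion. The data, the threshold model, and the helper names are made up for illustration.

```python
import random
from typing import Callable, List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def train_test_split(data: Sequence[T], test_fraction: float = 0.25,
                     seed: int = 0) -> Tuple[List[T], List[T]]:
    """Shuffle once with a fixed seed so the split is reproducible."""
    items = list(data)
    random.Random(seed).shuffle(items)
    n_test = max(1, int(len(items) * test_fraction))
    return items[n_test:], items[:n_test]


def accuracy(model: Callable[[float], int], samples: Sequence[Tuple[float, int]]) -> float:
    correct = sum(1 for x, label in samples if model(x) == label)
    return correct / len(samples)


def make_model(t: float) -> Callable[[float], int]:
    return lambda x: int(x > t)


# Toy labelled readings: label 1 when the reading exceeds 5 (made up for illustration)
data = [(float(v), int(v > 5)) for v in range(12)]
train, test = train_test_split(data)

# Fit the threshold on the training set only, then score it on the held-out set
best_t = max((t + 0.5 for t in range(12)), key=lambda t: accuracy(make_model(t), train))
print(best_t, accuracy(make_model(best_t), test))
```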